© 2026 | All Rights Reserved.

Want to explore how AI is shaping and shaking up the business world?

Marketing Strategist

A data-driven marketer passionate about empowering businesses through informed decision-making.

A few years ago, if you mentioned “AI” in everyday conversation, you’d likely have to clarify that you meant artificial intelligence. Jump to 2023, and that’s no longer the case. Today, bring up artificial intelligence and you’ll probably find yourself talking with someone who is a little worried about losing their job to a machine.

So, what is artificial intelligence? Simply put, AI is the brainpower of technology. It uses analytical and logic-based techniques to interpret what’s happening, support decisions, and take actions automatically. In simpler terms, it’s the technology that turns machines into decision-makers. Let’s dig a bit deeper into the key terms in artificial intelligence.

As defined by Gartner, artificial intelligence involves applying advanced analysis and logic-based techniques, including machine learning (ML), to interpret events, support decision-making, and automate actions. This definition acknowledges that AI technologies are still evolving and that they commonly combine probabilistic analysis with logic to handle uncertainty.

Different organizations and individuals may interpret artificial intelligence differently because it has so many applications. The lack of a universal definition reflects the many ways AI can enhance human activities, as well as its capacity to learn and act autonomously.

To effectively leverage the potential of artificial intelligence, your organization requires a well-defined strategy. This involves articulating and achieving consensus on a widely accepted definition that aligns with your specific AI goals. While embracing diverse perspectives, leaders in business, IT, and data and analytics must find common ground on what AI means for the organization. Without this shared understanding, crafting a strategy that maximizes the benefits of AI becomes a complex task.

It’s worth noting that AI technology vendors may present their own interpretations of the term. When engaging with them, seek detailed explanations on how their offerings align with your expectations for how artificial intelligence can deliver tangible value to your organization. These discussions are valuable opportunities to refine and solidify your organization’s unique perspective on AI.

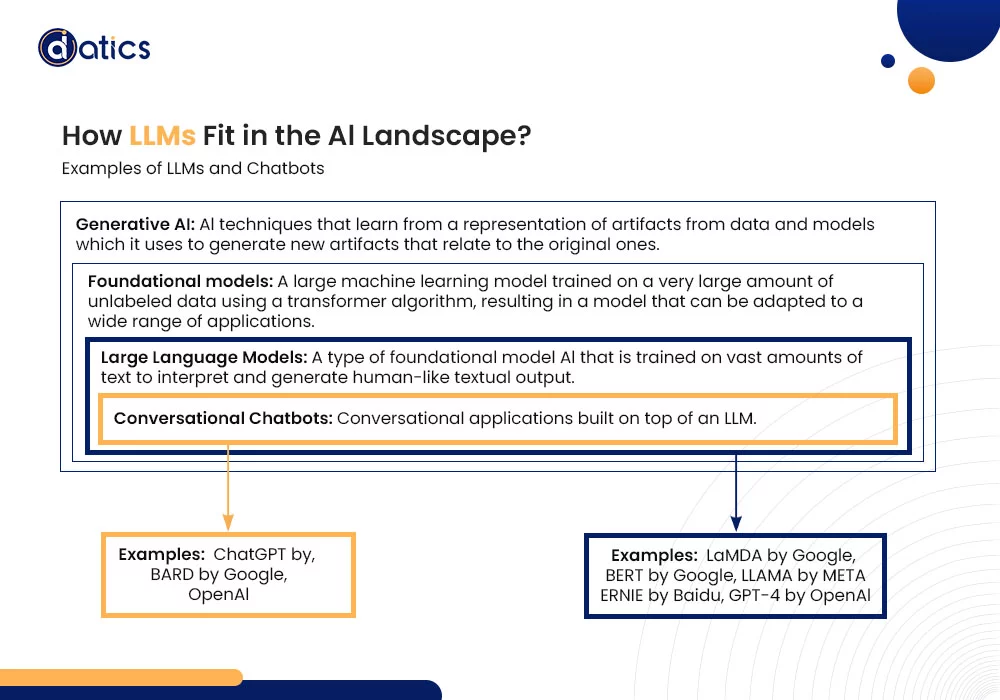

Large language models (LLMs) represent a fascinating breakthrough in artificial intelligence, with their roots firmly planted in the realm of text generation. The spotlight on LLMs intensified when OpenAI’s ChatGPT made its debut in November 2022, creating waves in how we generate content.

These models undergo training on large datasets, often encompassing billions of words sourced from simulations or public/private data collections. This extensive training equips LLMs with the ability to interpret textual inputs and craft responses that mimic human-like language. Already, LLMs play a pivotal role in assisting search engines to comprehend queries and formulate coherent answers.

Advances in LLMs have the power to reshape business operations by automating tasks once handled by humans, such as generating code and answering questions. This marks a significant shift, opening new avenues for efficiency and innovation in business.

Machine learning is a crucial tool for solving problems with AI. Machines don’t learn the way humans do; they store and process information in their own, often complex, ways. Machine learning analyzes data with mathematical models, uncovering patterns and knowledge that may be hard for humans to spot.

On its own, machine learning is purely analytical: it uses models to find insights in data. It can suggest actions, but it doesn’t make decisions without human input. Machine learning creates formulas that turn data into useful results. Through training, models learn patterns in data and provide new insights and predictions without being explicitly programmed. Many successful AI applications rely on machine learning.
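To make the idea of creating formulas through training concrete, here is a minimal Python sketch. It assumes the simplest possible formula, a straight line `y = w * x + b`, and uses hypothetical data; `train_linear_model` is an illustrative helper, not part of any library. The program is never told the rule behind the data; gradient descent adjusts `w` and `b` until the line fits.

```python
def train_linear_model(xs, ys, learning_rate=0.01, epochs=5000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction errors of the current formula on every example
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        # Step against the gradient to reduce the error
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical data that happens to follow y = 2x + 1 (the model is not told this)
xs = list(range(10))
ys = [2 * x + 1 for x in xs]

w, b = train_linear_model(xs, ys)
print(f"learned formula: y = {w:.2f} * x + {b:.2f}")
print("prediction for x = 12:", round(w * 12 + b, 2))
```

With this data the learned parameters should come out very close to `w = 2` and `b = 1`. Real models have far more parameters, but training follows the same principle of repeatedly reducing prediction error.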

Now, deep learning, a type of machine learning, goes deeper. It uses layers to extract knowledge from raw data, gradually solving more complex problems with better accuracy and less manual tweaking.
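A rough sketch of what layers add, under heavy simplification: the toy network below has two layers and solves XOR, a problem no single straight-line formula can solve. The weights are set by hand for illustration (a real deep learning model learns them from data), and `layer` and `xor_network` are hypothetical helper names.

```python
def relu(v):
    """Rectified linear activation: pass positive values, zero out negatives."""
    return max(0.0, v)


def layer(inputs, weights, biases, activation=relu):
    """One fully connected layer: weighted sum of inputs plus bias, then activation."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def xor_network(x1, x2):
    # Hidden layer: unit 1 counts active inputs, unit 2 fires only when both are on
    hidden = layer([x1, x2], weights=[[1, 1], [1, 1]], biases=[0, -1])
    # Output layer: combine the hidden features with no activation (identity)
    (output,) = layer(hidden, weights=[[1, -2]], biases=[0], activation=lambda v: v)
    return output


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, "->", xor_network(a, b))
```

Each hidden unit computes a simple feature, and the output layer combines those features; deep networks stack many such layers so that later layers build on what earlier ones extracted.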

Some organizations focus only on machine learning and deep learning, overlooking other valuable AI approaches. This can slow or stall AI initiatives, especially when machine learning alone cannot solve the problem at hand.

Machine learning often demands lots of well-labeled data, making it tricky for companies with smaller datasets or budget constraints. Using machine learning, including deep learning, for predictions lets AI automate choices, removing the need for constant human decisions.

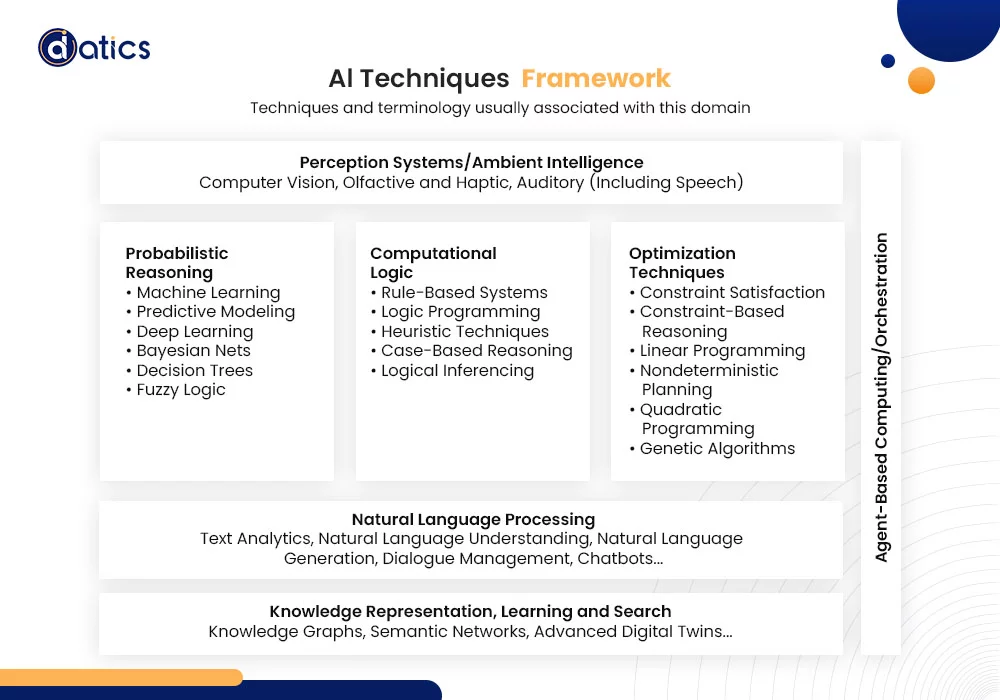

AI applications commonly rely on three main categories of well-established techniques:

Probabilistic reasoning techniques, often associated with machine learning, extract valuable insights from vast datasets. They uncover previously unknown knowledge by revealing correlations with specific goals or labels in the data. For instance, a machine learning approach might analyze customer records to identify factors and reveal how those factors correlate with churn. This lets organizations anticipate which customers may leave, illustrating the practical impact of probabilistic reasoning in AI applications.
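The churn example can be sketched in a few lines of Python. This is a simplified illustration, assuming hypothetical customer records and two made-up factors: it measures how strongly each factor correlates with the churn label using the Pearson correlation coefficient. A production system would typically go further and train a probabilistic model, such as logistic regression, on many more records.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical customer records: two factors plus a churn label (1 = churned)
customers = [
    {"support_tickets": 5, "months_as_customer": 3, "churned": 1},
    {"support_tickets": 4, "months_as_customer": 6, "churned": 1},
    {"support_tickets": 6, "months_as_customer": 2, "churned": 1},
    {"support_tickets": 1, "months_as_customer": 24, "churned": 0},
    {"support_tickets": 0, "months_as_customer": 36, "churned": 0},
    {"support_tickets": 2, "months_as_customer": 18, "churned": 0},
    {"support_tickets": 3, "months_as_customer": 8, "churned": 1},
    {"support_tickets": 1, "months_as_customer": 30, "churned": 0},
]

churn = [c["churned"] for c in customers]
correlations = {
    factor: pearson([c[factor] for c in customers], churn)
    for factor in ("support_tickets", "months_as_customer")
}

# Rank factors by the strength of their relationship with churn
for factor, r in sorted(correlations.items(), key=lambda item: -abs(item[1])):
    print(f"{factor}: r = {r:+.2f}")
```

A positive coefficient means higher values go with more churn; a negative one means the opposite. Correlation alone does not prove that a factor causes churn.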

Rule-based systems leverage the organization’s implicit and explicit knowledge by capturing it in structured rules. Business professionals can edit these rules, and the technology keeps the rule set consistent, preventing contradictions or circular reasoning, which is especially crucial when there are many rules. Compliance laws have recently brought rule-based approaches to the forefront, highlighting their effectiveness in ensuring adherence to regulatory requirements.
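The consistency checks described above can be sketched with a toy rule engine. Everything here is hypothetical (the rules, the facts, and the helper names): rules are if-then pairs over simple text facts, a `not ` prefix marks negation, forward chaining derives conclusions, and a cycle search over the rule graph flags circular reasoning. Commercial rule engines use far richer logic.

```python
def negate(literal):
    """Flip a fact between 'x' and 'not x'."""
    return literal[4:] if literal.startswith("not ") else "not " + literal


def forward_chain(facts, rules):
    """Apply rules repeatedly until no new conclusions can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


def find_contradictions(known):
    """Facts derived as both true and false."""
    return sorted(lit for lit in known if not lit.startswith("not ") and negate(lit) in known)


def has_circular_reasoning(rules):
    """Detect cycles in the graph that links each premise to its rule's conclusion."""
    graph = {}
    for premises, conclusion in rules:
        for premise in premises:
            graph.setdefault(premise, set()).add(conclusion)
    visiting, done = set(), set()

    def visit(node):
        if node in visiting:
            return True  # reached a fact that is still being expanded: a cycle
        if node in done:
            return False
        visiting.add(node)
        if any(visit(nxt) for nxt in graph.get(node, ())):
            return True
        visiting.remove(node)
        done.add(node)
        return False

    return any(visit(node) for node in list(graph))


rules = [
    (frozenset({"order over limit"}), "manual review required"),
    (frozenset({"trusted customer"}), "not manual review required"),
    (frozenset({"manual review required"}), "account flagged"),
]
known = forward_chain({"order over limit", "trusted customer"}, rules)
print("contradictions:", find_contradictions(known))
print("circular:", has_circular_reasoning(rules))

# Adding a rule that feeds a conclusion back into its own premise creates a loop
looping_rules = rules + [(frozenset({"account flagged"}), "manual review required")]
print("circular after edit:", has_circular_reasoning(looping_rules))
```

Here the first rule set yields a contradiction (manual review is both required and not required) but no cycle; adding the last rule makes that conclusion depend on itself.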

Traditionally used by operations research groups, optimization techniques maximize benefits while managing business trade-offs. They do this by finding the optimal combination of resources within specified constraints and a designated timeframe. Sometimes described as prescriptive analytics, these techniques identify optimal plans of action and have been used extensively for decades.

Operations research groups in asset-centric industries such as manufacturing and utilities, as well as in functions like logistics and supply chain management, rely on these techniques to achieve efficient outcomes. Their ability to balance competing factors makes them indispensable for optimizing business processes and decision-making.
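To show what finding an optimal combination of resources within constraints looks like, here is a small Python sketch with a hypothetical weekly production plan: two products, each with a profit and machine- and labor-hour requirements, and limited capacity. It searches every integer plan exhaustively, which only works for toy problems; real optimization work uses linear or integer programming solvers.

```python
from itertools import product

# Hypothetical weekly production data: profit and resource use per unit
PRODUCTS = {
    "A": {"profit": 40, "machine_hours": 2, "labor_hours": 1},
    "B": {"profit": 30, "machine_hours": 1, "labor_hours": 1},
}
LIMITS = {"machine_hours": 100, "labor_hours": 80}  # capacity available this week


def best_plan(products, limits, max_units=100):
    """Exhaustively search integer quantities for the most profitable feasible plan."""
    names = list(products)
    best, best_profit = None, float("-inf")
    for quantities in product(range(max_units + 1), repeat=len(names)):
        plan = dict(zip(names, quantities))
        # A plan is feasible only if it respects every resource limit
        feasible = all(
            sum(products[n][resource] * q for n, q in plan.items()) <= capacity
            for resource, capacity in limits.items()
        )
        if not feasible:
            continue
        profit = sum(products[n]["profit"] * q for n, q in plan.items())
        if profit > best_profit:
            best, best_profit = plan, profit
    return best, best_profit


plan, profit = best_plan(PRODUCTS, LIMITS)
print(plan, profit)
```

With these numbers the best feasible plan is 20 units of A and 60 units of B, for a profit of 2,600. The trade-off is visible: A earns more per unit, but it uses twice the machine hours.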

Following are the key emerging AI techniques, ranked by maturity:

Natural language processing (NLP) enables intuitive communication between humans and systems. It uses computational linguistics techniques to recognize, parse, interpret, tag, translate, and generate natural language.

Knowledge representation uses tools such as knowledge graphs or semantic networks to make data networks and graphs easier to access and analyze. For certain types of problems, these representations of knowledge are more intuitive to work with. Adoption of knowledge graph techniques has grown rapidly in the last three years.
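A knowledge graph stores facts as subject, relation, object triples that can be chained together. The minimal Python sketch below assumes a hypothetical supply chain graph and an illustrative `KnowledgeGraph` class; real graph databases add query languages, indexing, and schemas on top of the same idea.

```python
from collections import defaultdict


class KnowledgeGraph:
    """A tiny in-memory knowledge graph built from (subject, relation, object) triples."""

    def __init__(self):
        # Maps (subject, relation) to the set of objects linked by that relation
        self._edges = defaultdict(set)

    def add(self, subject, relation, obj):
        self._edges[(subject, relation)].add(obj)

    def objects(self, subject, relation):
        return set(self._edges.get((subject, relation), set()))

    def follow(self, start, *relations):
        """Follow a chain of relations from a starting node and return the nodes reached."""
        frontier = {start}
        for relation in relations:
            frontier = set().union(*(self.objects(node, relation) for node in frontier))
        return frontier


# Hypothetical facts about a product's supply chain
kg = KnowledgeGraph()
kg.add("Widget X", "supplied_by", "Acme Parts")
kg.add("Widget X", "supplied_by", "Bolt Co")
kg.add("Acme Parts", "located_in", "Germany")
kg.add("Bolt Co", "located_in", "Vietnam")

print(kg.follow("Widget X", "supplied_by", "located_in"))
```

Following `supplied_by` and then `located_in` answers which countries a product’s suppliers are based in, a question that is natural to express as a path through the graph.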

Agent-based computing, while less mature than established AI techniques, is gaining popularity. Software agents are persistent, autonomous, goal-oriented programs that act on behalf of users or other programs. Examples include chatbots.

In the current landscape, two main classes of agent applications are commonly used:

The first class is assistants, which can be generic, like meeting scheduling assistants in email systems, or more specific, such as contract validation softbots for sales automation applications.

The second class is agents embedded in devices, serving functions like automatic temperature setting in car diagnostic systems or home thermostats.
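The thermostat case is a simple illustration of a persistent, goal-oriented agent. In this hedged Python sketch, the agent repeatedly perceives the temperature and picks an action that moves toward its goal; the room model (a steady downward drift plus a fixed heating or cooling effect) and all parameter values are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class ThermostatAgent:
    """A minimal goal-oriented agent: keep the room near a target temperature."""
    target: float = 21.0
    tolerance: float = 0.5

    def decide(self, current_temp: float) -> str:
        # Perceive the environment, then choose an action that moves toward the goal
        if current_temp < self.target - self.tolerance:
            return "heat"
        if current_temp > self.target + self.tolerance:
            return "cool"
        return "idle"


def simulate(agent, start_temp, steps=20, drift=-0.2, effect=0.5):
    """Hypothetical room model: temperature drifts down; heating or cooling shifts it by `effect`."""
    temp = start_temp
    history = []
    for _ in range(steps):
        action = agent.decide(temp)
        if action == "heat":
            temp += effect
        elif action == "cool":
            temp -= effect
        temp += drift  # the environment changes regardless of the agent
        history.append((round(temp, 2), action))
    return history


history = simulate(ThermostatAgent(), start_temp=17.0)
for temp, action in history:
    print(f"{action:>4} -> {temp}")
```

Starting from a cold room, the agent heats until it enters the tolerance band around the target, then alternates between idling and short bursts of heating to stay close to it.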

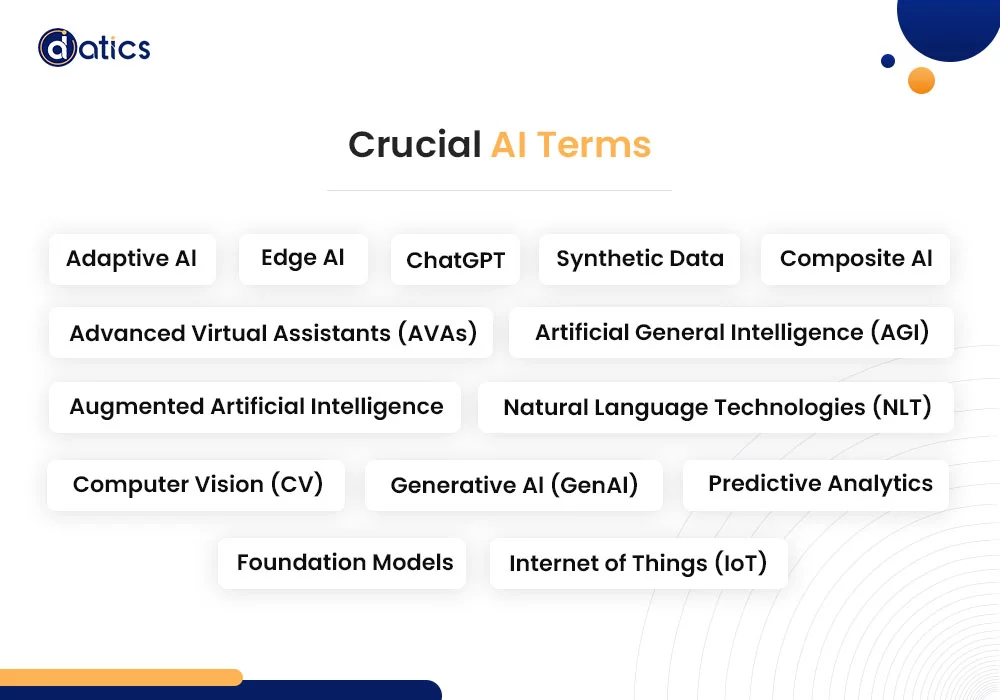

Adaptive AI allows a model’s behavior to change after deployment by learning behavioral patterns from past human and machine experience. Because this learning happens within the runtime environment, the system can respond quickly to changing real-world circumstances.

Advanced virtual assistants (AVAs), also known as conversational AI agents, process human inputs to execute tasks, deliver predictions, and offer decisions. They combine advanced user interfaces, natural language processing, and deep learning techniques to provide decision support and personalization, drawing on contextual and domain-specific knowledge.

Artificial general intelligence (AGI) describes a future form of AI that could understand or learn any intellectual task a person can perform, with broad, versatile intellectual abilities.

Augmented AI, often referred to as “intelligent X,” signifies a trend where AI techniques go beyond their traditional functions, providing additional and untapped functionality. This augmentation extends the capabilities of AI systems.

ChatGPT is an OpenAI service that pairs a conversational chatbot with a large language model (LLM) to create content. It is built on a foundation model trained on billions of words from multiple sources, which was then fine-tuned through reinforcement learning from human feedback (RLHF).

Composite AI involves the combined application of different AI techniques to enhance learning efficiency. By broadening knowledge representations, organizations can solve a wider range of business problems in a more efficient manner.

Computer Vision (CV) is a process that captures, processes, and analyzes images from the real world, allowing machines to extract meaningful and contextual information from the physical environment. CV techniques come with distinct technology and infrastructure requirements differing from traditional Machine Learning (ML) approaches.

Edge AI refers to the incorporation of AI techniques in Internet of Things (IoT) endpoints, gateways, and edge servers. This integration extends to applications ranging from autonomous vehicles to streaming analytics, offering differentiated use cases for digital business.

Generative AI (GenAI) learns from data to generate new content that resembles the original data without replicating it. This technology has the potential to create new forms of creative content, such as video, and to speed up research and development (R&D) cycles in fields such as medicine and product development.

Foundation models are large machine learning models initially trained on a broad set of unlabeled data. They are subsequently adapted to a wide range of applications through fine-tuning.

The IoT is the network of physical objects (things) embedded with technology to sense or interact with their internal workings and the external environment. Excluding general-purpose devices such as smartphones, IoT examples range from smart plugs to driverless vehicles. The IoT relies on a diverse array of IT endpoints and gateways, and the data they generate drives AI, especially where real-time responses are needed, as in autonomous vehicles.

Natural language technologies (NLT) are systems that analyze emotions and/or personality in text-based communications or surveys. These systems produce emotional scoring tools using technologies such as NLT, text analytics, convolutional neural networks, and recurrent neural networks.

Predictive analytics is a form of advanced analytics that examines data or content to predict future events. It employs various techniques, including regression analysis, forecasting, multivariate statistics, pattern matching, and predictive modeling. By drawing on insights from historical data, predictive analytics anticipates future outcomes, providing valuable foresight for informed decision-making.
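As a minimal example of one technique in that list, regression analysis, the Python sketch below fits a straight-line trend to hypothetical monthly sales using ordinary least squares and extrapolates it one month ahead. The data and the `fit_trend` helper are illustrative assumptions; real predictive models usually account for seasonality and uncertainty as well.

```python
def fit_trend(values):
    """Ordinary least squares fit of values against periods 1..n; returns (slope, intercept)."""
    n = len(values)
    periods = range(1, n + 1)
    mean_x = sum(periods) / n
    mean_y = sum(values) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(periods, values))
    sxx = sum((x - mean_x) ** 2 for x in periods)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical historical data: units sold in months 1 to 6
monthly_sales = [100, 104, 109, 113, 118, 122]

slope, intercept = fit_trend(monthly_sales)
next_month = len(monthly_sales) + 1
forecast = slope * next_month + intercept
print(f"trend: {slope:.2f} units/month, forecast for month {next_month}: {forecast:.1f}")
```

The fitted trend rises by about 4.46 units per month, giving a forecast of roughly 126.6 units for month 7.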

Synthetic data is artificially generated through machine learning. While it mirrors the statistical properties of real data, it leaves out identifying details such as names and personal information. In AI, synthetic data is becoming a crucial source of large datasets, capable of modeling outlying scenarios while safeguarding sensitive and personal data.
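A heavily simplified Python sketch of the idea, assuming a handful of hypothetical customer records: it learns only the mean and standard deviation of each numeric field and then samples new records from normal distributions with those statistics, so the identifying `name` field never reaches the output. Fitting a normal distribution per field is a stand-in chosen for brevity, not how production generators work.

```python
import random
import statistics

# Hypothetical "real" records that include an identifying field (name)
real_customers = [
    {"name": "Customer A", "age": 34, "monthly_spend": 120.0},
    {"name": "Customer B", "age": 45, "monthly_spend": 95.5},
    {"name": "Customer C", "age": 29, "monthly_spend": 150.25},
    {"name": "Customer D", "age": 52, "monthly_spend": 80.0},
    {"name": "Customer E", "age": 38, "monthly_spend": 110.75},
]
NUMERIC_FIELDS = ("age", "monthly_spend")


def fit_profile(records, fields):
    """Record each numeric field's mean and standard deviation; other fields are never read."""
    profile = {}
    for field in fields:
        values = [record[field] for record in records]
        profile[field] = (statistics.mean(values), statistics.stdev(values))
    return profile


def generate_synthetic(profile, n, seed=42):
    """Draw n new records from independent normal distributions matching the profile."""
    rng = random.Random(seed)  # fixed seed for reproducible output
    return [
        {field: rng.gauss(mean, stdev) for field, (mean, stdev) in profile.items()}
        for _ in range(n)
    ]


profile = fit_profile(real_customers, NUMERIC_FIELDS)
synthetic = generate_synthetic(profile, n=1000)

print("real mean spend:", round(profile["monthly_spend"][0], 2))
print("synthetic mean spend:", round(statistics.mean([r["monthly_spend"] for r in synthetic]), 2))
print("fields in synthetic rows:", sorted(synthetic[0]))
```

This sketch matches only each field’s mean and spread and treats fields independently; practical synthetic data generators also preserve correlations between fields and realistic value ranges (for example, whole-number ages).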

Understanding these terms equips executives with valuable insights into the technological advancements shaping the business world.

Recent strides in generative AI, exemplified by innovations like ChatGPT, have ignited new interest in artificial intelligence, which is moving beyond its role as a mere technology or business tool to become a ubiquitous product technology. AI’s impact on society is poised to rival that of transformative forces such as the internet, the printing press, or even electricity.

The following are some of Gartner’s strategic planning assumptions for AI:

By 2026, organizations that prioritize AI transparency, trust, and security will see their AI models achieve a 50% improvement in adoption, achievement of business goals, and user acceptance.

By 2026, enterprises that adopt AI engineering practices to build and manage adaptive AI systems will outperform their peers by at least 25% in the number of AI models they operationalize and the time it takes to operationalize them.

By 2027, at least two vendors that specialize in AI risk management functionality will be acquired by enterprise risk management vendors seeking to broaden the functionality they offer.

By 2027, at least one global company could see its AI deployment banned by a regulator for noncompliance with data protection or AI governance legislation. Such incidents underscore the growing importance of aligning AI practices with evolving legal and ethical frameworks.
